FABIAN OFFERT

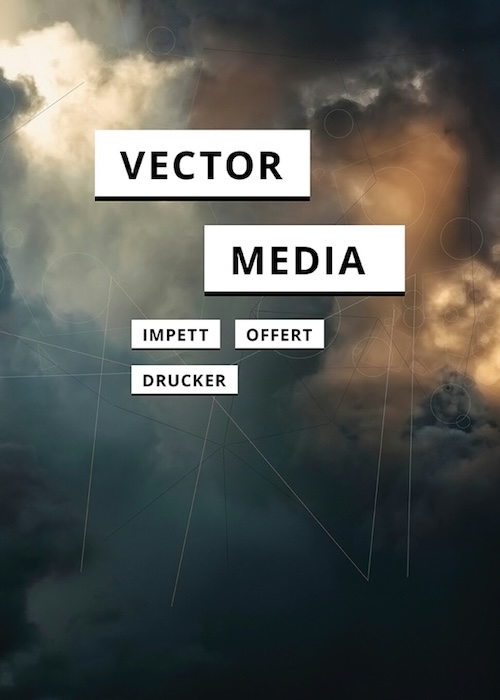

I am Assistant Professor for the History and Theory of the Digital Humanities, Director of the Center for the Humanities and Machine Learning, and Principal Investigator of the AI Forensics Project at the University of California, Santa Barbara. My research focuses on the epistemology, aesthetics, and politics of artificial intelligence: I study how machine learning models represent culture and what is at stake when they do. My most recent book, Vector Media, offers the first comprehensive history and theory of vector space as a space of universal commensurability in contemporary machine learning. You can find a full list of my publications here, or email me to get in touch.

Recent events

Jan. 8-10: AHA 2026 Chicago ● Jan. 13: University of Amsterdam, Critical AI Seminar Series ● Jan. 16: University of Texas at Austin, Humanities in the Age of AI ● Mar. 9-11: New York University, Cultural AI: An Emerging Field ● Mar. 19-20: Bibliotheca Hertziana, Limits, Opacity, and the Unknown Across Art, Science, and Media ● Mar. 30: Emory University, Data and Decision Sciences Speaker Series ● Apr. 20: San Diego State University, Center for Multisensory Architectural Research ● past events